
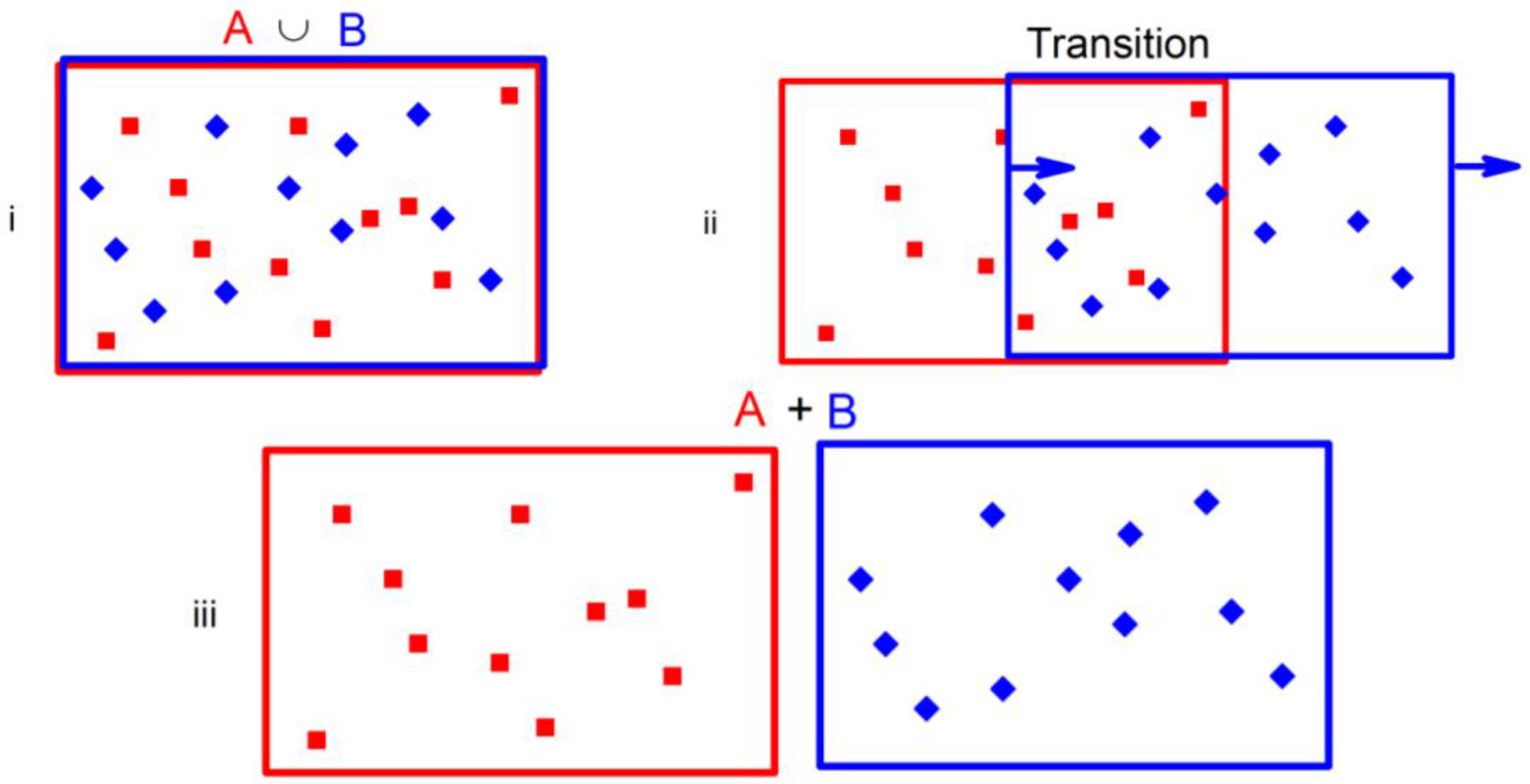

Entropy is a measure of the degree of randomness or disorder of a system. It is an easy concept to understand when thinking about everyday situations: the entropy of a room that has been recently cleaned and organized is low. There is a tendency in nature for systems to proceed toward a state of greater disorder or randomness.

In psychology, entropy refers to sufficient tension for positive change to transpire. For instance, Carl Jung, a Swiss psychiatrist and psychoanalyst, emphasized the importance of psychological entropy by saying, “there is no energy unless there is a tension of opposites”. Such energy dispersal is necessary for the "color" to happen in one’s life. One of Jung’s patients aptly stated, “I don’t know what you are going to do with me, but I hope you are going to give me something that is not gray”. Moreover, in psychotherapy, the client needs to be aware of his entropy, the inner conflicts that he is experiencing, so he can be more in charge of them.

This implementation of transfer entropy (TE) allows the user to condition the probabilities on any number of background processes, within hardware limits of course. The unconditioned and conditioned forms are sometimes called apparent and complete transfer entropy, respectively (Lizier2008). For the subsequent description, take X to be the source and Y the target.
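To make the source and target roles concrete, here is a minimal sketch of apparent transfer entropy from a source series X to a target series Y. It is an illustration only, not the implementation referred to above: the function name `transfer_entropy`, the history length of 1, and the simple frequency-count estimator are all assumptions, and no background processes are conditioned on.

```python
from collections import Counter
from math import log2


def transfer_entropy(source, target):
    """Apparent transfer entropy X -> Y in bits (hypothetical helper).

    Assumes discrete-valued series of equal length and a history
    length of 1; probabilities are estimated by counting.
    """
    if len(source) != len(target):
        raise ValueError("source and target must have the same length")
    if len(target) < 2:
        raise ValueError("need at least two time steps")

    n = len(target) - 1          # number of observed transitions
    triples = Counter()          # (y_next, y_prev, x_prev)
    hist_and_src = Counter()     # (y_prev, x_prev)
    next_and_hist = Counter()    # (y_next, y_prev)
    hist = Counter()             # y_prev

    for t in range(n):
        y_next, y_prev, x_prev = target[t + 1], target[t], source[t]
        triples[(y_next, y_prev, x_prev)] += 1
        hist_and_src[(y_prev, x_prev)] += 1
        next_and_hist[(y_next, y_prev)] += 1
        hist[y_prev] += 1

    te = 0.0
    for (y_next, y_prev, x_prev), count in triples.items():
        p_joint = count / n
        # p(y_next | y_prev, x_prev): prediction using the source too
        p_with_source = count / hist_and_src[(y_prev, x_prev)]
        # p(y_next | y_prev): prediction from the target's own past
        p_own_past = next_and_hist[(y_next, y_prev)] / hist[y_prev]
        te += p_joint * log2(p_with_source / p_own_past)
    return te


# Usage: the target copies the source with a one-step delay.
xs = [0, 1, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0, 1, 0, 1, 1, 0, 0, 0, 1]
ys = [0] + xs[:-1]
print(transfer_entropy(xs, ys))
```

With a series used as its own source, or with a constant source, this estimate is zero, because the source adds nothing beyond the target's own past.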

In information technology, entropy happens when there is an unpredictable signal in the cybernetics system. This is manifested as choppy communication, dropped calls, and too much static. In cities where there are sufficient cell towers, there is ample energy to sustain the signals; hence, the entropy is low.

For instance, you bought a good cup of hot coffee and it took an “organized thermal energy” to make it. You then left it on your desk to attend to something else. However, you forgot about it and when you came back to drink it, it was already too cold. Hence, “entropy” happened as the coffee lacked enough thermal energy for you to drink it, for “order” to ensue.

Approximate Entropy and Sample Entropy are two algorithms for determining the regularity of series of data based on the existence of patterns. Despite their similarities, the theoretical ideas behind those techniques are different but usually ignored.

The cross entropy criterion computes the cross entropy loss between input logits and target. In its usage example with a class target, the loss is evaluated against `target` and followed by `output.backward()`.

Computing the entropy of a distribution involves summing p log(p) with base 2 over all the possible outcomes in the distribution. A function to do this can be written in Python with NumPy (`import numpy as np`).
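The base-2 sum over outcomes mentioned above can be sketched as follows. The original function is not shown in full, so this is one possible version: the name `shannon_entropy`, the input checks, and the convention that zero-probability outcomes contribute nothing are assumptions.

```python
import numpy as np


def shannon_entropy(probabilities):
    """Entropy in bits of a discrete distribution (hypothetical helper)."""
    p = np.asarray(probabilities, dtype=float)
    if p.ndim != 1 or p.size == 0:
        raise ValueError("expected a non-empty 1-D sequence of probabilities")
    if np.any(p < 0) or not np.isclose(p.sum(), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # Skip zero-probability outcomes: p * log2(p) is taken as 0 there.
    nonzero = p[p > 0]
    return float(-np.sum(nonzero * np.log2(nonzero)))


# Usage: a fair coin versus a heavily biased one.
print(shannon_entropy([0.5, 0.5]))
print(shannon_entropy([0.9, 0.1]))
```

A fair coin gives one bit, the maximum for two outcomes, while the biased coin gives less because its result is easier to predict.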

Entropy describes the quantity of ambiguity and disorganization within a system. In physics, it is a measure of a system’s energy diffusion: a thermodynamic quantity for the thermal energy that is unavailable for doing mechanical work. Above is the formula for calculating the entropy of a probability distribution.
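For reference, the entropy of a discrete probability distribution is usually written as below. The symbols are assumptions for illustration: $p_i$ is the probability of outcome $i$, $n$ is the number of possible outcomes, and the base-2 logarithm gives the result in bits.

$$
H = -\sum_{i=1}^{n} p_i \log_2 p_i
$$

Outcomes with $p_i = 0$ are taken to contribute nothing to the sum.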
